
How Ksolves Cut Telecom Costs by 60% with Confluent to Apache Kafka Migration


For telecom analytics platforms processing millions of real-time events every day, the cost of staying on a proprietary Kafka distribution is not just financial. It is strategic. A leading telecom analytics firm had built its data streaming infrastructure on Confluent Kafka (a commercial, enterprise-licensed distribution of Apache Kafka), but as its analytics workloads grew, so did the licensing bill. The cost had become one of the largest line items in the platform’s operational budget, and with no ceiling in sight, the business case for migrating to open-source Apache Kafka architecture was clear.

The client is a telecom analytics organization processing high-throughput telemetry data across 12 mission-critical applications, all operating 24×7. Their Confluent enterprise licensing was consuming 60% of the data platform’s annual operational budget, with costs compounding as new analytics use cases were added. With vendor lock-in limiting infrastructure flexibility and a cloud-hosted open-source alternative offering equivalent performance at a fraction of the cost, the decision to migrate was made at the executive level.

Ksolves brought its AI-first engineering approach to design a phased, zero-risk migration path, one that let the client validate the open-source cluster under real production load before cutting over a single application.

The client's Confluent environment presented five compounding challenges that required precise engineering to resolve:

- High Licensing Costs: The recurring Confluent enterprise licensing fee was significantly impacting the client's budget, with costs scaling directly with cluster growth. Migrating to open-source Apache Kafka offered a clear path to eliminating this cost entirely.

- Zero Downtime Requirement: The Kafka cluster served 12 mission-critical applications operating 24×7, including real-time network telemetry and billing analytics. Even minutes of downtime would disrupt downstream pipelines and risk SLA breaches across all dependent systems.

- No Data Loss Tolerance: The platform handled high-throughput telemetry data across 50+ active topics, where the integrity and continuity of every event stream were critical. Zero tolerance for data loss or message ordering violations was a hard requirement.

- Application Compatibility: All 12 producer and consumer applications were tightly integrated with the Confluent Kafka ecosystem. A migration that required code-level changes to any application would introduce unacceptable delivery risk and extend the project timeline significantly.

- Infrastructure Migration Complexity: Shifting from a Confluent-managed environment to a self-hosted, cloud-deployed open-source Kafka cluster required meticulous planning for resource provisioning, Schema Registry migration, security reconfiguration, and monitoring integration across all environments.

Ksolves used AI-assisted dependency mapping, configuration review, and integration validation to compress the discovery and planning phase from weeks to days. The migration was executed in eight structured phases, each designed to eliminate risk before the next phase began.

- AI-powered Initial Assessment: Ksolves identified all active Kafka topics, producers, consumers, and their interdependencies across the Confluent environment. Confluent-specific components including Schema Registry, access controls, and monitoring hooks were fully inventoried before any migration activity began.

- Open-Source Kafka Cluster Setup: A high-availability, open-source Apache Kafka cluster was provisioned on the client's preferred cloud platform, secured with TLS, SASL, and strict ACL policies, and engineered for robust resilience and message durability using optimal replication settings.

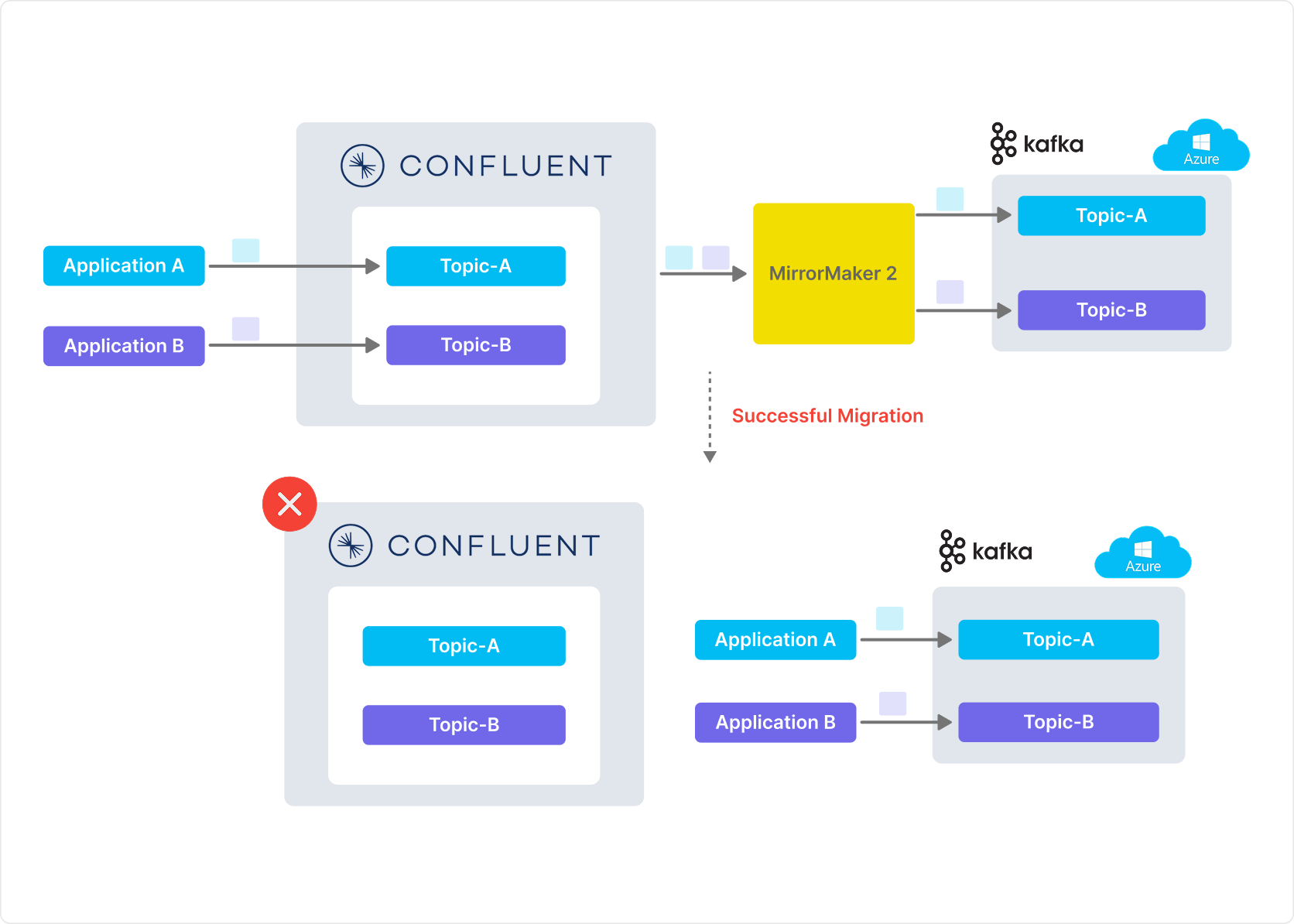

- Real-Time Replication Using MirrorMaker 2: MirrorMaker 2 was configured to replicate all 50+ topics in real time from the Confluent cluster to the open-source Kafka cluster, preserving per-partition message order, partition structure, and replication factors. Replication lag was monitored continuously and held under 500 ms throughout the migration window.

- Data Integrity Validation: Message integrity and order were verified between source and target clusters across all topics. Lag, throughput, and message consistency were monitored continuously to confirm replication quality before any application cutover was initiated.

- Phased Cutover Strategy: Applications were migrated to the new Kafka cluster in carefully planned stages, beginning with low-risk services to validate the target environment under real load before cutting over mission-critical workloads.

- Application Compatibility Assurance: All producer and consumer configurations were updated to point to the new cluster without requiring any code-level changes, preserving the client's existing application architecture entirely.

- Testing and Validation: End-to-end integration tests were executed across all 12 consumer groups, validating throughput, offset continuity, and message delivery accuracy before the final cutover was approved.

- Decommissioning Legacy Setup: After all services were verified on the new Kafka cluster, the legacy Confluent environment was safely decommissioned, with 24×7 post-migration monitoring in place to ensure stable operation in the weeks following cutover.
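As a rough illustration of the replication phase, the sketch below renders a minimal MirrorMaker 2 properties file for one-way replication from the Confluent cluster to the open-source cluster. The case study does not publish the actual configuration, so the function name `render_mm2_properties`, the bootstrap addresses, the topic pattern, and the replication factor of 3 are all assumptions. The property keys are standard MirrorMaker 2 settings; `IdentityReplicationPolicy` (available from Apache Kafka 3.0) keeps topic names unchanged on the target, which is one way to let applications switch clusters through configuration alone.

```python
def render_mm2_properties(source_bootstrap, target_bootstrap,
                          topics_regex=".*", replication_factor=3):
    """Return the text of a MirrorMaker 2 properties file (illustrative only).

    All argument values are assumptions; the real cluster addresses, topic
    pattern, and replication factor are not given in the case study.
    """
    settings = {
        # Aliases for the two clusters; "source" is Confluent, "target" is Apache Kafka.
        "clusters": "source, target",
        "source.bootstrap.servers": source_bootstrap,
        "target.bootstrap.servers": target_bootstrap,
        # One-way flow only: source -> target.
        "source->target.enabled": "true",
        "target->source.enabled": "false",
        "source->target.topics": topics_regex,
        # Replication factor for topics MirrorMaker 2 creates on the target.
        "replication.factor": str(replication_factor),
        # Keep topic names identical on the target (no "source." prefix).
        "replication.policy.class":
            "org.apache.kafka.connect.mirror.IdentityReplicationPolicy",
        # Carry topic configs and consumer-group offsets across clusters.
        "sync.topic.configs.enabled": "true",
        "source->target.emit.checkpoints.enabled": "true",
        "source->target.sync.group.offsets.enabled": "true",
    }
    return "\n".join(f"{key} = {value}" for key, value in settings.items()) + "\n"


if __name__ == "__main__":
    # Hypothetical host names, for illustration only.
    print(render_mm2_properties("confluent-broker:9092", "oss-broker:9092"))
```

TLS and SASL client settings for each cluster are left out of this sketch; they would normally be added under the `source.` and `target.` prefixes.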

Technology Stack

| Component | Details |
|---|---|
| Source Platform | Confluent Kafka (Enterprise License) |
| Target Platform | Apache Kafka (Open Source), cloud-hosted |
| Replication Tool | Apache Kafka MirrorMaker 2 |
| Security | TLS, SASL, ACL policies |
| Monitoring | Prometheus, Grafana |
| Topics Migrated | 50+ active Kafka topics |
| Applications | 12 mission-critical producers and consumers |
| Migration Duration | 6 weeks, zero downtime |
| Replication Lag | Under 500 ms throughout migration |
| Post-Migration Support | 24×7 dedicated monitoring |
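The integrity checks and the 500 ms replication-lag target described above can be pictured as a simple gate that must pass before an application is cut over. The sketch below is one possible shape for such a gate, not the tooling Ksolves used: the names `partition_digest` and `ready_for_cutover`, the in-memory record lists, and the lag samples are all hypothetical. It compares an order-sensitive digest of each partition on both clusters and checks that no lag sample reached the threshold.

```python
import hashlib

LAG_THRESHOLD_MS = 500  # target stated in the case study: lag held under 500 ms


def partition_digest(records):
    """Order-sensitive SHA-256 digest of (key, value) byte pairs from one partition."""
    digest = hashlib.sha256()
    for key, value in records:
        for field in (key, value):
            if field is None:
                digest.update(b"\x00")  # marker for a null key or value
            else:
                # Length-prefix each field so adjacent fields cannot blur together.
                digest.update(b"\x01" + len(field).to_bytes(4, "big") + field)
    return digest.hexdigest()


def ready_for_cutover(source, target, lag_samples_ms):
    """Return a list of problems; an empty list means the gate passes.

    source / target: dict mapping (topic, partition) -> list of (key, value)
    read over the same offset window on each cluster (assumed input shape).
    """
    problems = []
    for topic_partition in sorted(source):
        if topic_partition not in target:
            problems.append(f"{topic_partition}: missing on target")
        elif partition_digest(source[topic_partition]) != partition_digest(
            target[topic_partition]
        ):
            problems.append(f"{topic_partition}: content or order differs")
    worst_lag = max(lag_samples_ms, default=0)
    if worst_lag >= LAG_THRESHOLD_MS:
        problems.append(f"replication lag peaked at {worst_lag} ms")
    return problems


if __name__ == "__main__":
    src = {("telemetry", 0): [(b"k1", b"a"), (b"k2", b"b")]}
    dst = {("telemetry", 0): [(b"k2", b"b"), (b"k1", b"a")]}  # same events, reordered
    print(ready_for_cutover(src, dst, [120, 340]))
```

Because the topics are live, a real check would have to compare bounded offset windows rather than whole partitions; the digest approach itself stays the same.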

The migration delivered measurable outcomes across cost, uptime, data integrity, and operational flexibility:

- 60% Reduction in Data Platform Operating Costs: Confluent enterprise licensing had consumed 60% of the data platform's annual operational budget. Eliminating those fees entirely cut data platform operating costs by 60%, and the one-time migration investment was recovered within the first quarter after deployment.

- Zero Downtime Across 12 Mission-Critical Applications Over 6 Weeks: All 12 applications continued operating without a single service interruption throughout the phased migration. Zero SLA breaches, zero consumer group restarts, and zero operator-visible disruptions were recorded.

- 100% Data Integrity Validated Across 50+ Topics: Cross-cluster message validation confirmed 100% message-order preservation and zero data loss across all migrated topics before any application cutover was initiated.

- Zero Code-Level Changes Across All Applications: All 12 producer and consumer applications were migrated without a single line of application code modified, preserving the client's existing development investment entirely.

- Full Operational Flexibility Restored: With a cloud-hosted open-source Apache Kafka cluster, the client regained complete infrastructure control, eliminated vendor dependency, and established a scalable foundation that grows with analytics workloads at no additional licensing cost.
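The cost outcome follows from simple arithmetic: if licensing is 60% of the annual budget and is removed entirely, the budget falls by that same 60%, and the payback period is the migration cost divided by the monthly saving. The sketch below works this through with placeholder numbers. The budget and migration cost are hypothetical (the case study gives only percentages and the "first quarter" payback), and the calculation assumes no new infrastructure or support costs after migration.

```python
def cost_summary(annual_budget, license_share, migration_cost):
    """Work out the saving and payback period from a licensing share.

    annual_budget and migration_cost are hypothetical inputs; only the
    60% licensing share comes from the case study.
    """
    license_cost = annual_budget * license_share      # annual fee removed
    monthly_saving = license_cost / 12
    return {
        "annual_saving": license_cost,
        "remaining_budget": annual_budget - license_cost,
        "reduction_pct": 100 * license_cost / annual_budget,
        "payback_months": migration_cost / monthly_saving,
    }


if __name__ == "__main__":
    # Placeholder figures chosen only to make the arithmetic easy to follow.
    summary = cost_summary(annual_budget=1_000_000, license_share=0.60,
                           migration_cost=120_000)
    for name, value in summary.items():
        print(f"{name}: {value:,.1f}")
```

With these placeholder inputs the payback period is under three months, which matches the shape of the "recovered within the first quarter" claim without reproducing the client's actual figures.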

“We migrated every topic, every consumer, every producer to open-source Kafka without a minute of downtime or a single lost message. The licensing savings were immediate. The 24×7 post-migration support gave us full confidence in the new environment from day one.”

— Head of Data Platform, Leading Telecom Analytics Firm

By migrating the client from a licensed Confluent setup to a fully open-source Apache Kafka environment, Ksolves, with its AI-first delivery approach, eliminated Confluent licensing fees and cut data platform operating costs by 60%, with zero downtime across all 12 mission-critical applications and 100% data integrity validated across 50+ migrated topics.

The 8-phase migration methodology, built around MirrorMaker 2 real-time replication, phased application cutover, and TLS/SASL security configuration, gave the client a path to open-source Kafka that eliminated risk at every step. With dedicated 24×7 post-migration monitoring in place, the client transitioned from a vendor-managed environment to full infrastructure ownership without a single disruption to live operations.

For organizations running Confluent Kafka and looking to take back infrastructure control, partner with Ksolves, a leading Apache Kafka development company, and find out what a migration path free of licensing costs looks like for your cluster.
