How Ksolves Built an AI-Accelerated Apache Iceberg Lakehouse That Gave 200+ Retail Stores Live Data in 60 Seconds

Every morning, the central data team at one of the Middle East’s largest retail conglomerates started their day the same way: opening dashboards that showed them yesterday. With 200+ hypermarkets running across the Middle East, Asia, and beyond, millions of POS transactions were flowing in daily. But by the time that data was processed, cleaned, and queryable, the trading day it described was already over.

Inventory decisions were reactive. Stock-out alerts arrived after shelves had been empty for hours. Pricing adjustments chased events that had already passed. And with each region running its own tax formats, currencies, and SKU structures, there was no clean path to a single version of the truth without rebuilding the data infrastructure entirely.

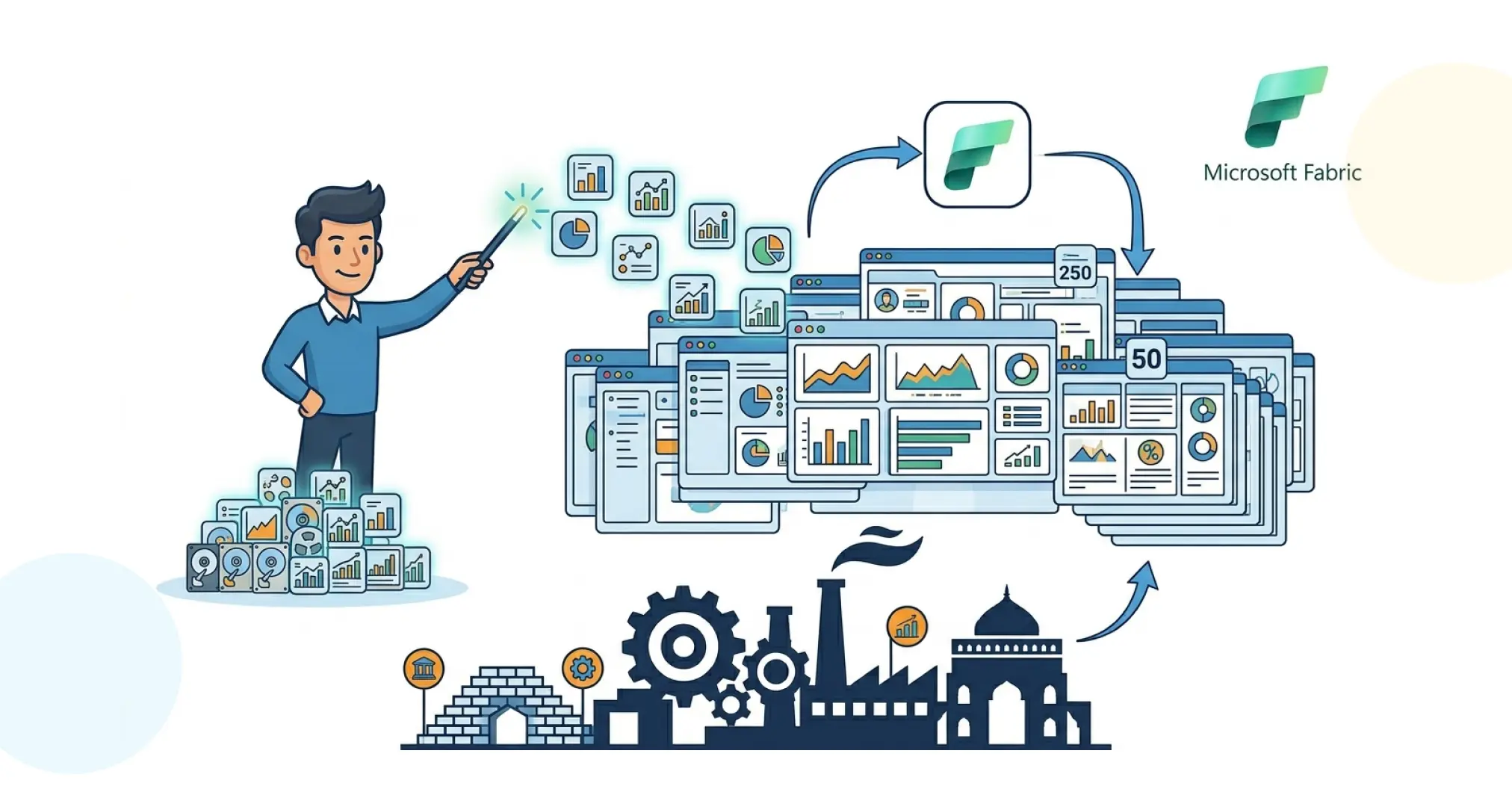

Ksolves designed and built a unified sovereign data lakehouse on Apache Iceberg, consolidating edge-level store data from 200+ sites into a real-time analytics platform. What previously took an internal team 7 weeks to scope and architect was completed in 5 weeks, with Ksolves consultants using AI tools throughout for processor development, schema design validation, and configuration testing.

The problems were not just technical. Each one carried a direct business cost.

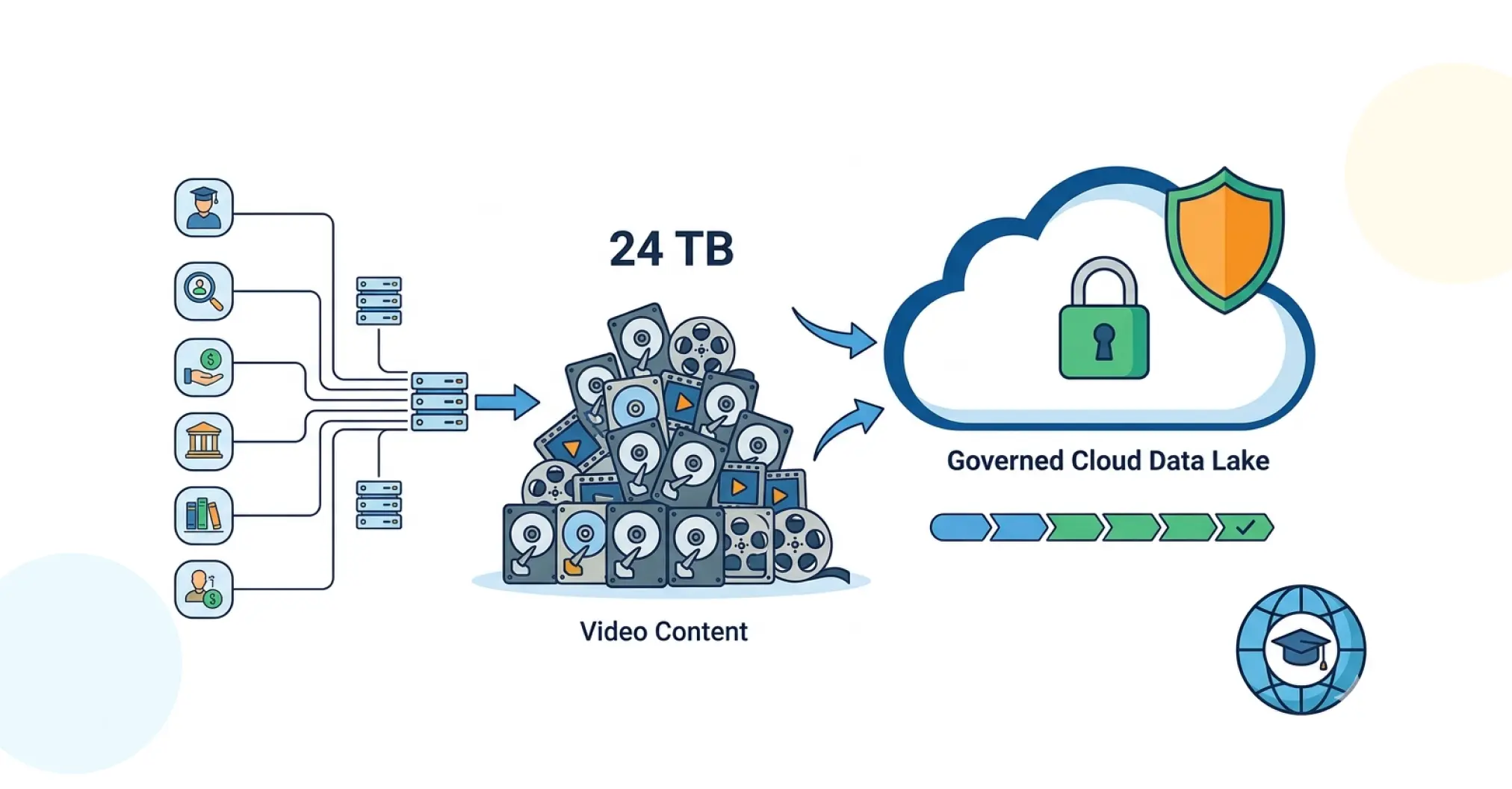

- Secure Global Ingestion: Hundreds of store-level POS systems were transferring sensitive transaction data to a central hub across public and semi-public networks. Every transfer was a potential exposure point. Security had to be enforced at the edge, not patched at the centre.

- Edge Transformation: Store logs arrived at the central cluster carrying raw noise: mismatched tax codes, regional currency formats, store-specific SKU conventions. The cluster was not built to absorb that kind of volume at that level of disorder. The data needed standardisation before it left the building.

- ACID Compliance at Scale: With millions of daily sales records feeding financial systems, a partial write was not a data quality issue. It was a reconciliation failure. The legacy system was producing an average of 14 such errors per month, each one requiring manual investigation and correction.

- Regional Data Sovereignty: Regional managers operated under strict contractual and regulatory access controls. A store manager in Dubai was not permitted to see Kuala Lumpur's numbers, and vice versa. Multi-tenant data isolation was not a feature request. It was a legal requirement.

Ksolves built the architecture around one principle: clean data at the edge, unify at the centre.

- Edge Processing: Rather than pulling raw, noisy POS data into a central cluster and cleaning it there, Ksolves deployed Apache NiFi at each store as a frontline data processor. As Apache NiFi development experts, the team built custom processors for each of the 12 regional tax schemas, handling currency conversion, tax normalisation, and SKU mapping locally before a single record left the site. Building those processors would typically take 18 days of development and QA. Using AI tools for code generation and test scaffolding, the team completed the same work in 11 days.

- Real-Time Lakehouse Transition: Spark Structured Streaming 4.1.1 consumes cleaned Kafka topics and writes directly into Apache Iceberg 1.10.1 tables. Iceberg's time-travel capabilities allow the platform to query inventory states at any historical timestamp, which is essential for financial reconciliation across peak trading periods and regulatory audits.

- High-Performance Querying: Trino 480 runs sub-second SQL queries directly against Iceberg tables on MinIO, removing the need for a separate data warehouse entirely. Queries that previously waited for overnight batch jobs now execute against live Iceberg tables within seconds of a transaction occurring. That is where the 24-hour lag disappears.

- Unified Visualisation with Governed Access: Apache Superset 6.0.0 connects via Trino to deliver real-time dashboards to every regional management tier simultaneously. Keycloak 26.6.0 enforces SSO with per-region RBAC, using custom realm isolation per territory. Each regional manager sees exactly their authorised scope and nothing else.
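To make the "clean data at the edge" step more concrete, the normalisation logic described above (currency conversion, tax normalisation, SKU mapping) might look roughly like the sketch below. This is plain Python illustrating the logic only, not NiFi processor code. The lookup tables, field names, exchange rates, and the choice of USD as the single reporting currency are all hypothetical assumptions, not details from the engagement.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical lookup tables; real deployments would load these from
# centrally managed configuration rather than hard-coding them.
FX_TO_USD = {"AED": Decimal("0.2723"), "MYR": Decimal("0.2100")}  # illustrative rates
TAX_CODE_MAP = {("AE", "VAT5"): "STANDARD", ("MY", "SST-S"): "STANDARD"}
SKU_MAP = {("AE", "DXB-00123"): "SKU-123", ("MY", "KL_123"): "SKU-123"}


@dataclass(frozen=True)
class CanonicalSale:
    """One POS line item in the (assumed) canonical central schema."""
    store_id: str
    sku: str
    tax_class: str
    amount_usd: Decimal


def normalise(raw: dict) -> CanonicalSale:
    """Map a raw store record to the canonical schema, or reject it.

    Rejecting unmapped values at the store keeps disorder out of the
    central cluster instead of silently passing it downstream.
    """
    region = raw["region"]
    try:
        rate = FX_TO_USD[raw["currency"]]
        tax_class = TAX_CODE_MAP[(region, raw["tax_code"])]
        sku = SKU_MAP[(region, raw["local_sku"])]
    except KeyError as missing:
        raise ValueError(
            f"unmapped value {missing} in record from {raw.get('store_id')}"
        ) from None
    # Decimal avoids binary floating-point drift in financial amounts.
    amount = (Decimal(raw["amount"]) * rate).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )
    return CanonicalSale(raw["store_id"], sku, tax_class, amount)


sale = normalise({
    "store_id": "DXB-01", "region": "AE", "currency": "AED",
    "amount": "100.00", "tax_code": "VAT5", "local_sku": "DXB-00123",
})
```

With the illustrative rate above, `sale.amount_usd` is `Decimal("27.23")`, and two stores with different local SKU conventions resolve to the same canonical `SKU-123`.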

Technology Stack: NiFi, Kafka, Spark Structured Streaming, Iceberg, Trino, MinIO, Superset and Keycloak

| Component | Version | Role |
|---|---|---|
| Apache NiFi | 2.4.0 | Edge processing at each store; centralised config push for pricing and tax logic across 200+ sites via Parameter Contexts and Git-based flow versioning |
| Apache Kafka | 3.9.2 | Multi-node broker; ingestion backbone between NiFi edge and Spark Structured Streaming |
| Apache Spark (Structured Streaming) | 4.1.1 | Real-time Kafka consumption; direct writes to Iceberg tables |
| Apache Iceberg | 1.10.1 | Open table format; ACID transactions, time-travel audit, schema evolution for multi-format POS data |
| Trino | 480 | Federated query engine; sub-second SQL across MinIO-stored Iceberg tables |
| MinIO | 2025 release | On-premises S3-compatible object storage; replaces legacy enterprise data warehouse storage |
| Apache Superset | 6.0.0 | Real-time dashboard layer; connects via Trino for live regional analytics |
| Keycloak | 26.6.0 | SSO and RBAC; per-region realm configuration for multi-tenant data isolation |
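As an illustration of the time-travel audit capability listed for Iceberg, a reconciliation job could query a table as it stood at a given moment using the `FOR TIMESTAMP AS OF` clause that Trino's Iceberg connector supports. The sketch below only builds the SQL string. The table name `lake.retail.inventory` and the column names are hypothetical, and the helper itself is an assumption for illustration rather than part of the delivered platform.

```python
import re
from datetime import datetime, timezone

# Allow only simple dotted identifiers (catalog.schema.table) so the
# table name cannot be used to inject arbitrary SQL.
_IDENTIFIER = re.compile(r"[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*){0,2}")


def inventory_as_of_sql(table: str, as_of: datetime) -> str:
    """Build a Trino query that reads an Iceberg table as of a past instant."""
    if not _IDENTIFIER.fullmatch(table):
        raise ValueError(f"invalid table name: {table!r}")
    if as_of.tzinfo is None:
        # A naive timestamp is ambiguous across stores in different time zones.
        raise ValueError("as_of must be timezone-aware")
    ts = as_of.astimezone(timezone.utc).strftime("%Y-%m-%d %H:%M:%S")
    # Column names below are hypothetical.
    return (
        f"SELECT sku, store_id, on_hand_qty FROM {table} "
        f"FOR TIMESTAMP AS OF TIMESTAMP '{ts} UTC'"
    )


query = inventory_as_of_sql(
    "lake.retail.inventory",
    datetime(2024, 11, 29, 23, 59, 59, tzinfo=timezone.utc),
)
```

The resulting string can be submitted through any Trino client. Normalising to UTC before formatting means one query yields the same snapshot no matter which region's manager runs it.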

- Operational Agility: A configuration change that once required a 3-day manual rollout across individual store systems now takes under 4 minutes via NiFi's centralised parameter contexts. New pricing logic, updated tax rules, or regulatory changes go live across all 200+ stores before the next trading hour opens.

- Financial Accuracy: Apache Iceberg's ACID transactions produced zero partial-write events across 18 months of production, including four peak holiday periods with transaction volumes running 60% above the daily average. The legacy system had been averaging 14 financial reconciliation errors per month. That number is now zero.

- Cost Efficiency: Moving to on-premises MinIO storage eliminated legacy enterprise data warehouse licensing costs, reducing annual storage infrastructure spend by approximately 40%, an estimated saving of $420,000 per year at the client's current volume of 11 TB/month.

- Real-Time Visibility: Time-to-insight dropped from 24 hours to under 60 seconds. Regional managers across the Middle East and Asia now have real-time inventory visibility during trading hours. Stock-out alerts that once arrived the morning after now arrive within a minute of a transaction occurring.
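The headline figures above imply a few numbers that are not stated directly. The short calculation below derives them from the stated values only. The results are approximate, because the 40% saving is itself described as approximate, and the avoided-error count assumes the legacy rate of 14 per month would have continued unchanged over the 18 months.

```python
def implied_baseline(annual_saving: int, saving_percent: int) -> int:
    """Annual spend before the saving, given the saving and its percentage."""
    return annual_saving * 100 // saving_percent


annual_saving = 420_000                                 # USD per year, as stated
baseline = implied_baseline(annual_saving, 40)          # implied prior annual spend
remaining = baseline - annual_saving                    # implied current annual spend
avoided_errors = 14 * 18                                # errors/month x months in production

print(baseline, remaining, avoided_errors)              # 1050000 630000 252
```

In other words, the stated saving implies a prior storage infrastructure spend of roughly $1.05 million per year, and about 252 reconciliation investigations avoided over the production period.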

“We were making inventory and pricing decisions based on data that was a day old. In retail, that is a lifetime. Our regional managers can now see live dashboard updates from stores across three time zones within a minute of a transaction happening. The NiFi edge deployment was the piece we had not considered. Cleaning data at the store level before it reaches the central platform was the right call, and the financial accuracy improvement alone justified the entire engagement.”

— Group Chief Data Officer, Global Retail Conglomerate (Anonymised per NDA)

From 24-hour batch lag to sub-60-second real-time inventory visibility across 200+ stores. That is the standard Ksolves sets for global retail data lakehouse engagements, and it is replicable for any distributed retail operation regardless of regional complexity. With 12+ years of experience, a team of Apache NiFi developers, testers, and architects, and a 90% client retention rate, Ksolves brings the same depth to every engagement. Whether you are building from scratch, upgrading an existing NiFi deployment, or evaluating an open-source lakehouse as an alternative to your current data warehouse, Ksolves can scope the right architecture for your needs.

Modernize your data architecture with Ksolves!