Frequently Asked Questions

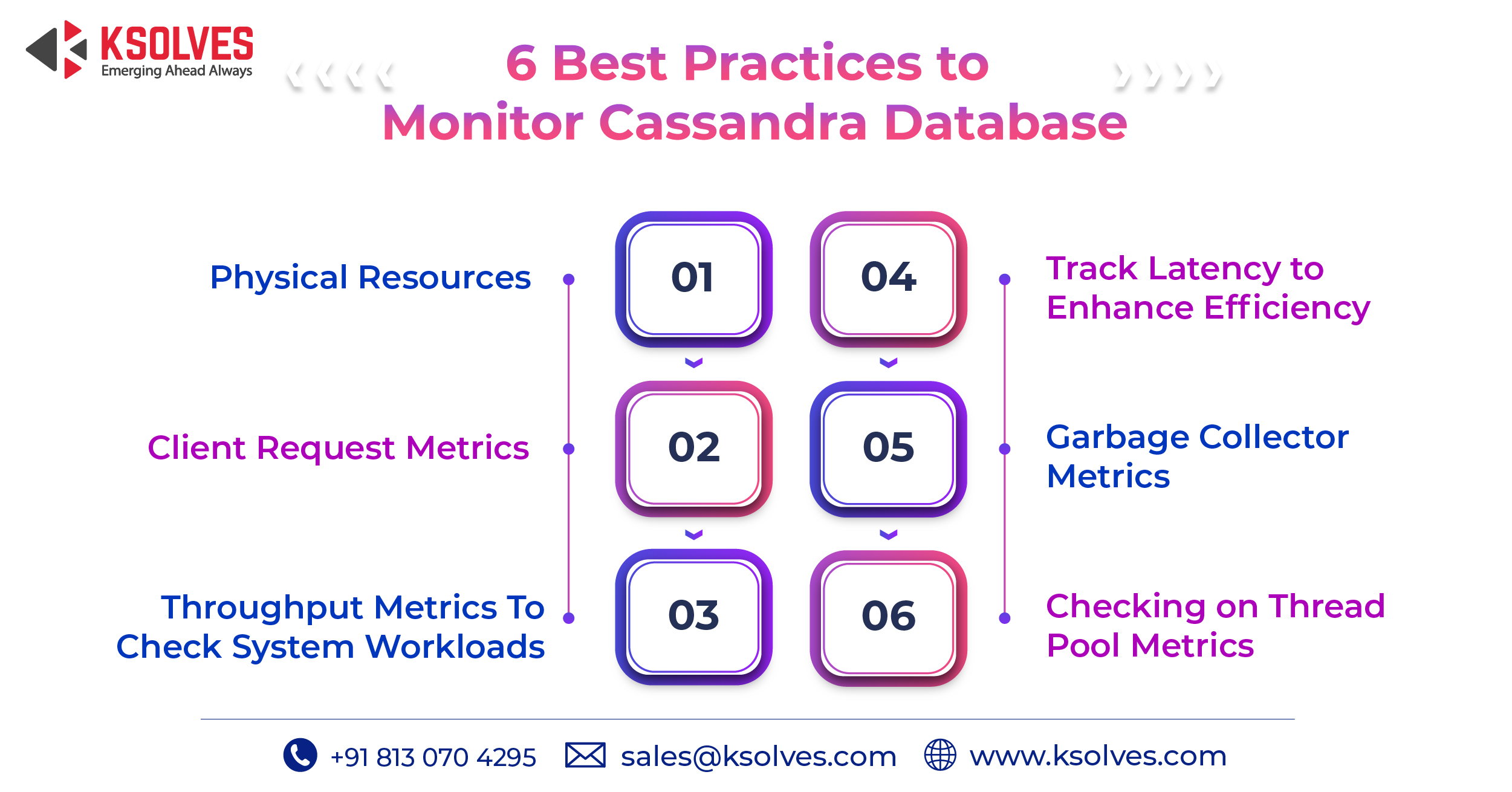

What is Cassandra monitoring and why is it important?

Cassandra monitoring is the continuous tracking of key performance metrics – such as CPU utilization, read/write latency, throughput, garbage collection pauses, and thread pool activity – across all nodes in a Cassandra cluster. It is critical because Cassandra operates as a distributed NoSQL database where a single node failure or resource bottleneck can cascade into inconsistency, data loss, or application downtime. Without active monitoring, teams often discover performance problems only after they have already impacted users.

What happens if a Cassandra node goes down and is not repaired promptly?

When a Cassandra node stays down longer than the hinted handoff window (three hours by default), the other nodes stop storing hints for it, so writes made after that point are not replayed when it comes back. If the node is not repaired after it rejoins the cluster, it can return stale or inconsistent data to clients, especially for reads at low consistency levels. Over time, this can lead to silent data inconsistencies and increased repair overhead, making proactive node monitoring and immediate alerting essential for cluster health.
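The decision this implies can be sketched in a few lines of Python. This is a minimal illustration, not an operational tool: the function name `needs_repair` is hypothetical, and the three-hour default is an assumption taken from the window described above (the actual value comes from the hint window setting in `cassandra.yaml` and may differ on your cluster).

```python
from datetime import timedelta

# Assumption: the default hint window of three hours mentioned above.
DEFAULT_HINT_WINDOW = timedelta(hours=3)


def needs_repair(downtime: timedelta,
                 hint_window: timedelta = DEFAULT_HINT_WINDOW) -> bool:
    """Return True when the outage outlasted the hint window, so hints
    alone cannot bring the node up to date and a repair should be run."""
    return downtime > hint_window


print(needs_repair(timedelta(minutes=45)))  # False
print(needs_repair(timedelta(hours=5)))     # True
```

An alerting rule built on this idea would compare the time since a node was last seen up against the configured window and page the on-call engineer before the window expires.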

How do you monitor read and write latency in Apache Cassandra?

Cassandra exposes read and write latency metrics through Java Management Extensions (JMX). These metrics can be collected by tools such as Prometheus with the JMX Exporter or DataStax OpsCenter, and visualized in dashboards such as Grafana. Best practice is to track both 99th percentile and mean latency separately for reads and writes, and to set alerting thresholds so that rising latency is detected before the cluster can no longer serve client requests in acceptable time. Ksolves provides Cassandra support services that include real-time monitoring setup and latency tuning for production clusters.
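To show why mean and 99th percentile latency are tracked separately, here is a small Python sketch. It does not talk to JMX; it works on a hypothetical list of latency samples in microseconds, and the function names and threshold values are assumptions chosen for illustration only.

```python
import math
from statistics import mean


def p99(samples):
    """Nearest-rank 99th percentile of a non-empty list of latencies."""
    ordered = sorted(samples)
    rank = math.ceil(99 * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def latency_alerts(samples_us, mean_limit_us, p99_limit_us):
    """Return the names of the thresholds breached by these samples."""
    alerts = []
    if mean(samples_us) > mean_limit_us:
        alerts.append("mean")
    if p99(samples_us) > p99_limit_us:
        alerts.append("p99")
    return alerts


# Hypothetical read latencies: mostly fast, with a slow tail.
read_samples = [800] * 97 + [40_000] * 3
print(latency_alerts(read_samples, mean_limit_us=5_000, p99_limit_us=20_000))
# ['p99']
```

In this example the mean stays under its limit while the slow tail trips the p99 alert, which is the kind of problem a mean-only dashboard would hide.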

What is the difference between throughput monitoring and latency monitoring in Cassandra?

Throughput monitoring measures the number of read and write operations a cluster handles per second, helping teams identify whether the cluster is being overloaded. Latency monitoring, on the other hand, measures how long each individual request takes to complete. A cluster can sustain high throughput with acceptable latency under normal load, but once throughput exceeds capacity, latency increases sharply. Monitoring both together gives a complete picture of cluster health and indicates the right moment to scale by adding nodes.
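The relationship between the two signals can be sketched as follows. This is an illustrative Python example under stated assumptions: the counter readings, the capacity figure, the baseline latency, and the factor of 2 are all hypothetical values, and `ops_per_second` and `is_overloaded` are names invented for this sketch.

```python
def ops_per_second(count_before, count_after, interval_s):
    """Throughput from two readings of a cumulative operation counter."""
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    return (count_after - count_before) / interval_s


def is_overloaded(throughput, p99_ms, baseline_p99_ms, capacity_ops,
                  latency_factor=2.0):
    """Flag overload when load has reached the assumed capacity AND
    p99 latency has risen sharply relative to its normal baseline."""
    return (throughput >= capacity_ops
            and p99_ms >= latency_factor * baseline_p99_ms)


# Hypothetical readings taken 60 seconds apart.
writes = ops_per_second(1_200_000, 1_800_000, 60)
print(writes)  # 10000.0
print(is_overloaded(writes, p99_ms=45, baseline_p99_ms=12, capacity_ops=9_000))
# True
```

Requiring both conditions avoids alerting on a busy but healthy cluster (high throughput, normal latency) and points more directly at the case where adding nodes is likely to help.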

Why do garbage collection pauses affect Cassandra performance?

Apache Cassandra runs on the Java Virtual Machine (JVM), which periodically pauses application threads to perform garbage collection (GC). During these stop-the-world pauses, the affected node cannot process requests, which directly increases latency for any in-flight client reads or writes. Prolonged or frequent GC pauses indicate that heap memory is under pressure, often because of misconfigured JVM settings or excessive object allocation. Monitoring GC pause duration and frequency, and setting alerts on both, is one of the six core Cassandra monitoring best practices.
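As a rough illustration of alerting on both duration and frequency, the Python sketch below summarizes a window of GC pauses. The pause values, the 500 ms limit, and the 5% paused-time limit are assumptions for the example, not recommended settings, and the function names are hypothetical.

```python
def gc_pause_summary(pauses_ms, window_s):
    """Summarize GC pauses observed during a window of window_s seconds."""
    total_ms = sum(pauses_ms)
    return {
        "count": len(pauses_ms),
        "max_ms": max(pauses_ms, default=0),
        "paused_fraction": total_ms / (window_s * 1000),
    }


def gc_alerts(summary, max_pause_ms=500, max_fraction=0.05):
    """Return alert names for a single long pause or too much time paused."""
    alerts = []
    if summary["max_ms"] > max_pause_ms:
        alerts.append("long_pause")
    if summary["paused_fraction"] > max_fraction:
        alerts.append("frequent_pauses")
    return alerts


# Hypothetical pauses (milliseconds) seen in one 60-second window.
summary = gc_pause_summary([120, 90, 850, 200], window_s=60)
print(summary["paused_fraction"])  # 0.021
print(gc_alerts(summary))          # ['long_pause']
```

Tracking the fraction of time spent paused alongside the longest single pause separates one unusually long collection from sustained heap pressure made up of many short collections.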

What are Cassandra thread pool metrics and when should they raise an alert?

Cassandra uses a set of internal thread pools to handle different types of operations, such as reads, writes, compaction, and gossip. Thread pool metrics expose the number of active, pending, and blocked tasks in each pool. A blocked task count greater than zero is a warning signal that the pool is saturated and requests are being dropped or delayed. Teams should set alerts to trigger whenever blocked task counts rise above zero, as this directly indicates internal bottlenecks that degrade user-facing performance.
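One way to act on this is to scan a thread pool report for non-zero blocked counts. The Python sketch below parses an illustrative text sample that resembles the table printed by `nodetool tpstats`; the numbers are made up, and the exact column layout is an assumption that may differ between Cassandra versions, so treat the parser as a starting point rather than a drop-in tool.

```python
# Illustrative sample only; real output may have different columns.
SAMPLE_REPORT = """\
Pool Name              Active   Pending   Completed   Blocked   All time blocked
ReadStage                   2         5      104233         0                  0
MutationStage              32       410      987654         3                 17
CompactionExecutor          1         0        2210         0                  0
GossipStage                 0         0       55021         0                  0
"""


def blocked_pools(report):
    """Return {pool_name: blocked_count} for pools with blocked tasks."""
    result = {}
    for line in report.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) != 6:
            continue  # ignore blank or unexpected lines
        name, _active, _pending, _completed, blocked, _all_time = fields
        if int(blocked) > 0:
            result[name] = int(blocked)
    return result


print(blocked_pools(SAMPLE_REPORT))  # {'MutationStage': 3}
```

An alert would fire whenever the returned dictionary is non-empty, matching the "blocked above zero" rule described above.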

Who can help implement Cassandra monitoring best practices for enterprise clusters?

For teams managing large-scale or mission-critical Cassandra deployments, Ksolves offers dedicated Apache Cassandra support and development services. As a DataStax-certified team, Ksolves helps enterprises set up real-time monitoring pipelines, configure JVM and GC alerting, tune read/write latency thresholds, and implement proactive repair schedules – all tailored to the specific workload and infrastructure of each client.

Have more questions about Cassandra monitoring? Contact our team for a free consultation.

AUTHOR

Anil Kushwaha, Technology Head at Ksolves, is an expert in Big Data. With over 11 years at Ksolves, he has been pivotal in driving innovative, high-volume data solutions with technologies such as NiFi, Cassandra, Spark, and Hadoop. Passionate about advancing tech, he ensures smooth data warehousing for client success through tailored, cutting-edge strategies.
